The mobile robot presented here was equipped with an autonomous navigation system built in the ROS framework and based mainly on data from a Microsoft Kinect sensor. The robot has four independently driven wheels and is rather small: its width and length are about 35 cm, and its height with the Kinect sensor is below 50 cm. The pictures show the real robot and its visualizations.

The first version of the robot, without an autonomous navigation system, was described on the following page: Four wheeled mobile robot with Raspberry Pi. It was equipped with a Raspberry Pi computer, a set of sensors, and four brushed DC motors from Pololu.

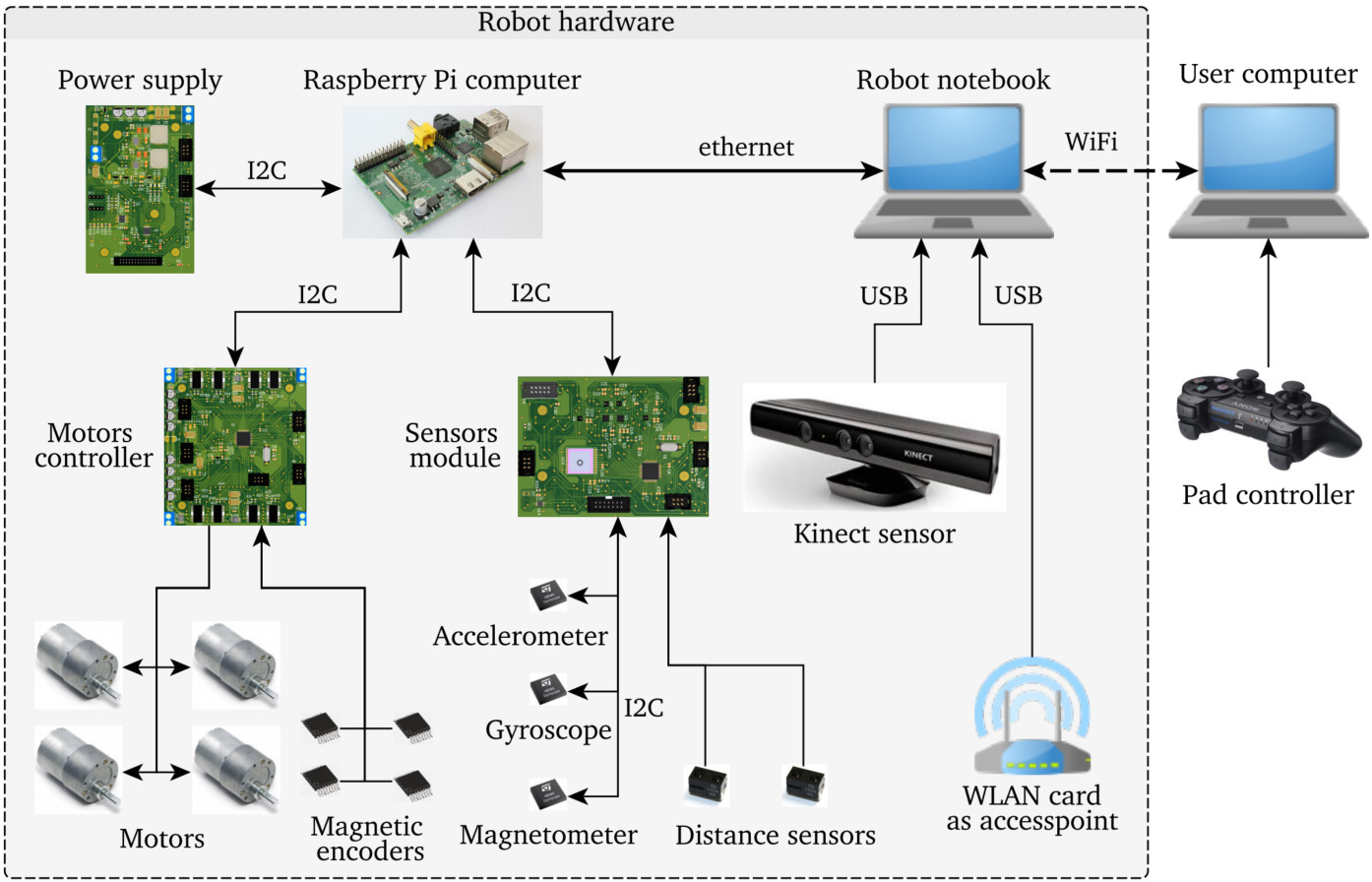

For the autonomous system, the mobile platform was extended with an additional computer (temporarily a notebook with an Intel i3 and 4 GB RAM) and the Microsoft Kinect sensor with mounting elements. The diagram shows the hardware architecture of the robot.

The architecture of the high-level control system of the mobile robot

The control system of the robot has a layered structure and is distributed over a few computation units. One of the requirements for the low-level control system was real-time operation, so all time-sensitive algorithms run on an ARM-based microcontroller. Many of the solutions used were described in a previous entry. The high-level part of the control system does not need to meet hard real-time requirements, so non-real-time interfaces such as Ethernet or USB were used. The diagram below shows the architecture of the control system.

The high-level layer of the control system was implemented in the ROS framework. This development environment provides mechanisms for communication and synchronization between software nodes. Moreover, ROS makes it easy to run control algorithms on several computers, so part of the control system runs on the Raspberry Pi and the other part on a notebook. Components were implemented as nodes that communicate with each other through a mechanism called topics. The control system consists of the following components:

- PS3 Pad teleoperation

- Keyboard teleoperation

- Velocities filter

- Velocities multiplexer

- User application with rviz

- Localization filter

- Hardware driver

- Kinect OpenNI

- Navigation

- Laser scan from Kinect

PS3 Pad teleoperation

This component allows manual control of the mobile robot with a Sony DualShock 3 pad. A deadman button was implemented for safety purposes.
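The deadman idea can be illustrated with a minimal Python sketch. It is not the robot's actual teleoperation node: the `Twist2D` type, the button and axis indices, and the velocity limits are all hypothetical values chosen for illustration. The point is only that the command is forced to zero whenever the deadman button is not held.

```python
from dataclasses import dataclass


@dataclass
class Twist2D:
    linear: float = 0.0   # forward velocity, m/s
    angular: float = 0.0  # yaw rate, rad/s


# Hypothetical limits and pad indices, chosen only for illustration.
MAX_LINEAR = 0.5      # m/s
MAX_ANGULAR = 1.5     # rad/s
DEADMAN_BUTTON = 10   # index of the button that must be held
AXIS_LINEAR = 1       # stick axis used for forward/backward motion
AXIS_ANGULAR = 0      # stick axis used for turning


def joy_to_twist(axes, buttons):
    """Map pad state to a velocity command.

    Returns a zero command unless the deadman button is pressed,
    so releasing the pad stops the robot.
    """
    if not buttons[DEADMAN_BUTTON]:
        return Twist2D()
    return Twist2D(
        linear=MAX_LINEAR * axes[AXIS_LINEAR],
        angular=MAX_ANGULAR * axes[AXIS_ANGULAR],
    )
```

In a ROS node, a function like this would typically be called from the callback that receives pad messages, with the result published as the velocity command.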

Keyboard teleoperation

This component allows the platform to be controlled with defined keyboard keys. It is implemented as a Python script in which the function of each key can be set.

Velocities multiplexer

The velocities multiplexer component allows switching between sources of velocity commands, for example autonomous navigation or manual control.
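One common way to build such a multiplexer is to give each source a priority and a timeout, and to forward the command from the highest-priority source that has published recently. The sketch below assumes that scheme; the `VelocityMux` class, the source names, and the timeout value are hypothetical and are not taken from the robot's software.

```python
class VelocityMux:
    """Select one velocity command among several sources by priority."""

    def __init__(self, priorities, timeout=0.5):
        # priorities: dict mapping source name -> priority (higher wins)
        self.priorities = priorities
        self.timeout = timeout  # seconds after which a source is stale
        self.latest = {}        # source name -> (timestamp, command)

    def update(self, source, command, now):
        """Store the newest command received from a known source."""
        if source not in self.priorities:
            raise KeyError(f"unknown source: {source}")
        self.latest[source] = (now, command)

    def select(self, now):
        """Return (source, command) for the best non-stale source.

        If no source is active, return (None, (0.0, 0.0)) so the robot stops.
        """
        active = [
            (self.priorities[source], source, command)
            for source, (stamp, command) in self.latest.items()
            if now - stamp <= self.timeout
        ]
        if not active:
            return None, (0.0, 0.0)
        _, source, command = max(active, key=lambda item: item[0])
        return source, command
```

With a higher priority assigned to the pad than to navigation, manual control overrides the autonomous commands while the operator is driving, and navigation takes over again once the pad commands time out.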

Velocities filter

This component filters the velocities sent to the low-level controller. It allows the velocities and accelerations of the mobile platform to be bounded.
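Bounding velocity and acceleration can be sketched for a single velocity component as below. This is a simplified illustration, not the filter used on the robot; the function name and parameters are hypothetical.

```python
def limit_velocity(target, previous, max_vel, max_acc, dt):
    """Bound a requested velocity and its rate of change.

    target   -- requested velocity
    previous -- velocity sent in the previous control step
    max_vel  -- absolute velocity limit
    max_acc  -- absolute acceleration limit
    dt       -- time since the previous step, in seconds
    """
    # Clamp the request to the allowed velocity range.
    target = max(-max_vel, min(max_vel, target))
    # Clamp the change so it does not exceed max_acc * dt.
    max_step = max_acc * dt
    step = max(-max_step, min(max_step, target - previous))
    return previous + step
```

Applied at every control step to both the linear and the angular velocity, this turns a sudden jump in the requested velocity into a ramp.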

Hardware driver

A dedicated driver for the hardware components of the robot. It gets data from the sensors module and the motors controller and publishes them to ROS topics. The functions of the driver are as follows:

- sending velocity commands from the topic /cmd_vel to the low-level motors controller,

- getting odometry data, which contains the relative position and orientation of the robot, from the low-level controller and publishing that data on the topic /wheels_odom,

- reading data from the sensors module, that is, from the inertial sensor, magnetometers, and proximity sensors, and publishing the data to the following topics: /imu/raw_data and /imu_mag.

The component runs on the Raspberry Pi and communicates with lower-level components over the I2C interface. It was written in C++ and uses the bcm2835 library for the Broadcom BCM2835 processor.

User application with rviz

This software allows the user to control the platform. It is based on the ROS visualization tool rviz. It displays a map of the robot's surroundings and the image from the Kinect RGB camera, and provides features for setting autonomous navigation goals.

Localization filter

To improve the odometry-based localization results, an inertial measurement unit (IMU) was used. The data fusion is based on an Extended Kalman Filter (EKF).
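The predict and update cycle behind this kind of fusion can be shown on a much simpler problem. The sketch below is a scalar Kalman filter on the heading only, not the EKF used on the robot. It assumes that the yaw rate from one source drives the prediction and that a heading measurement from another source corrects it. With such a linear model the EKF equations reduce to the ordinary Kalman filter. The class name and noise values are hypothetical.

```python
import math


def wrap_angle(angle):
    """Wrap an angle to the range [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


class YawKalmanFilter:
    """Scalar Kalman filter that estimates the robot heading (yaw)."""

    def __init__(self, yaw=0.0, variance=1.0,
                 process_noise=0.01, measurement_noise=0.05):
        self.yaw = yaw                              # estimated heading, rad
        self.variance = variance                    # estimate uncertainty
        self.process_noise = process_noise          # added per second of prediction
        self.measurement_noise = measurement_noise  # variance of a heading measurement

    def predict(self, yaw_rate, dt):
        """Integrate a yaw rate over dt and grow the uncertainty."""
        self.yaw = wrap_angle(self.yaw + yaw_rate * dt)
        self.variance += self.process_noise * dt

    def update(self, measured_yaw):
        """Correct the estimate with a heading measurement."""
        innovation = wrap_angle(measured_yaw - self.yaw)
        gain = self.variance / (self.variance + self.measurement_noise)
        self.yaw = wrap_angle(self.yaw + gain * innovation)
        self.variance *= 1.0 - gain
```

A full localization filter estimates position and velocity as well as heading and linearizes a nonlinear motion model at each step, which is what makes it an extended Kalman filter.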

Kinect OpenNI

The OpenNI driver for the Kinect. It provides data from the Kinect sensor such as the depth image or RGB image. The data are published to specific topics.

Laser scan from Kinect

This software converts a depth image from the Kinect sensor to a 2D laser scan message (sensor_msgs/LaserScan). Additionally, it removes the ground from the depth image and compensates for the tilt angle of the sensor. The component was published as part of the depth_nav_tools package on the ROS page.
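The core of the conversion can be sketched with a pinhole camera model. The example below is a simplified illustration and not the code from depth_nav_tools: it skips ground removal and tilt compensation, takes the nearest valid depth in each image column, and projects it onto the horizontal plane. The function name, the range limits, and the convention that a depth of zero means "no reading" are assumptions.

```python
import math


def depth_rows_to_scan(depth_rows, fx, cx, range_min=0.45, range_max=4.0):
    """Convert rows of a depth image to planar scan angles and ranges.

    depth_rows -- list of image rows, each a list of depths in meters
                  measured along the optical axis (0.0 = no reading)
    fx, cx     -- horizontal focal length and principal point, in pixels
    Returns (angles, ranges); ranges outside the limits are set to infinity.
    """
    width = len(depth_rows[0])
    angles, ranges = [], []
    for u in range(width):
        # Bearing of this column; columns left of the center get positive angles.
        angles.append(math.atan2(cx - u, fx))
        valid = [row[u] for row in depth_rows if row[u] > 0.0]
        if not valid:
            ranges.append(math.inf)
            continue
        z = min(valid)           # nearest obstacle in this column
        x = (u - cx) * z / fx    # lateral offset from the optical axis
        r = math.hypot(x, z)     # distance in the horizontal plane
        ranges.append(r if range_min <= r <= range_max else math.inf)
    return angles, ranges
```

Taking the minimum over several rows instead of a single row lets obstacles that are lower or higher than the sensor still appear in the scan, which is also why the ground has to be removed first in a complete implementation.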

Navigation

A reconfigured standard ROS package for navigation tasks.

Tests of the autonomous navigation system

The pictures below show the graphical interface, which visualizes the mobile robot and a map of its surroundings.

The tests show that it is possible to create a map and navigate autonomously using only the Microsoft Kinect sensor. However, it should be noted that the Kinect sensor has some drawbacks in this application, such as a narrow field of view and insufficient range. As a result, the robot sometimes has problems localizing itself properly on the map.

Can you share the name of the motor driver for this robot?

Yes, sure. I’ve used the following motor driver:

http://mdrwiega.com/2016/05/11/dc-motors-controller-for-mobile-platforms-with-ros-support/